STAT-12: Verification/Validation Sampling Plans for Proportion Nonconforming

This is part of a series of articles covering the procedures in the book Statistical Procedures for the Medical Device Industry.

Purpose

This procedure provides tables and instructions for selecting sampling plans for FDA process validation and design verification to ensure they are based on a valid statistical rationale. The plans determine the sample size and acceptance criteria. They make confidence statements like 95% confidence the process or device is more than 99% reliable or conforming. These sampling plans are often referred to as confidence-reliability sampling plans. They are for the statistical property proportion conforming or nonconforming. They require that requirements be established for individual units of product. They apply to design verification (STAT-04), process validation (STAT-03), validation of pass/fail inspections (STAT-08) and CAPA effectiveness checks (STAT-07).

Appendices

- Attribute Single and Double Sampling Plans for Proportion Nonconforming

- Variables Single and Double Sampling Plans for Proportion Nonconforming

- Selecting Sampling Plans for Proportion Nonconforming using Software

- Lower Confidence Limit for Percent Conforming—Attribute Data

- Lower Confidence Limit for Percent Conforming—Variables Data

- Sampling Plans for Proportion Nonconforming

Highlights

Attribute Sampling Plans

- Appendix F of STAT-12 contains tables like the one shown below for 95%/99% – 95% confidence of more than 99% reliable or conforming. This is equivalent to 95% confidence of less than 1% nonconforming. This table contains attribute single and double sampling plans.

- 95% confidence of more than 99% conformance means there is a 95% chance of rejecting a 99% conforming product/process. 99% conforming is therefore an unacceptable level of quality designed to fail.

- All the above sampling plans, if they pass, allow the same confidence statement to be made. They offer the same protection against a bad product/process passing. They are all equivalent from the customer/regulatory point of view. They differ with respect to their sample sizes and their chances of passing a good product/process. The decision of which confidence statement to use should be based on risk and must be justified. The choice of which sampling plan to use for a given confidence statement is a business decision.

- The AQLs in the above table are nonconformance levels that have a 95% chance of passing the sampling plan. They are useful in deciding which sampling plan to use. Historical data can be used to estimate the nonconformance rate and then matched to the AQL. If historical data is not available, data from similar products or processes can be used. If there is no good estimate of the nonconformance rate, stay away from the top of the table. These are the hardest plans for a good product/process to pass.

- The top plan, n=299, a=0, has the smallest sample size. However, it also has the lowest AQL, so it maximizes the chance a good product/process will fail.

- The double sampling plans have a first sample size not much greater than the top single sampling plan. They offer a good compromise between sample size and the chance of false rejection of a good product/process. They are generally preferred to the single sampling plans.

- Attribute sampling plans are always applicable. For measurable characteristics, they make no assumption about the underlying distribution of the data. However, they have higher sample sizes. For measurable characteristics, variables sampling plans can be used to dramatically lower the sample size.
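As a rough illustration of where plans such as n=299, a=0 come from, the sketch below uses the binomial distribution (which the discussion further down names as the basis of the attribute tables) to find the smallest sample size for each accept number that has at most a 5% chance of passing at 1% nonconforming. It also finds the AQL, the nonconformance level with a 95% chance of passing. The function names are illustrative and are not taken from STAT-12, and the published tables may round the AQL differently.

```python
from math import comb

def prob_accept(n, a, p):
    """Probability a single sampling plan (n, a) passes when the true
    fraction nonconforming is p (binomial model)."""
    return sum(comb(n, x) * p**x * (1 - p)**(n - x) for x in range(a + 1))

def smallest_n(a, rql, beta):
    """Smallest sample size with P(accept) <= beta at the RQL."""
    n = a + 1
    while prob_accept(n, a, rql) > beta:
        n += 1
    return n

def aql(n, a, pa=0.95):
    """Fraction nonconforming that passes with probability pa (bisection)."""
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2
        if prob_accept(n, a, mid) > pa:
            lo = mid   # still passes too often, so the AQL is higher
        else:
            hi = mid
    return (lo + hi) / 2

# 95%/99% plans: at most a 5% chance of passing at 1% nonconforming
for a in range(3):
    n = smallest_n(a, rql=0.01, beta=0.05)
    print(f"a={a}: n={n}, AQL={100 * aql(n, a):.3f}%")
```

The sample sizes for a=0, 1 and 2 match the 299, 473 and 628 quoted in the discussion. The very low AQL of the a=0 plan shows why it is the hardest plan for a good process to pass.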

Variables Sampling Plans

- Appendix F also contains tables of variables sampling plans. There are separate tables for a one-sided specification and a two-sided specification. When the measurements follow the normal distribution or can be transformed to the normal distribution as described in STAT-18, variables sampling plans can be used to dramatically lower the sample size.

Variables Sampling Plans – One-Sided Specification

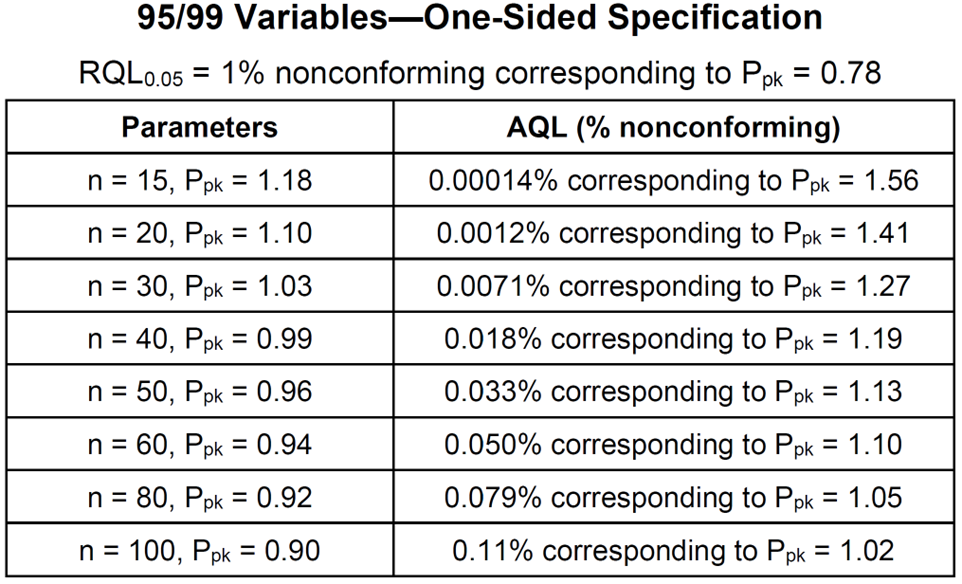

- The one-sided specification table is used for both an upper specification limit only and a lower specification limit only. Appendix F of STAT-12 contains tables like the one shown below for 95%/99% – 95% confidence of more than 99% reliable or conforming.

- All the above sampling plans, if they pass, allow the same confidence statement to be made. They offer the same protection against a bad product/process passing. They are all equivalent from the customer/regulatory point of view. They differ with respect to their sample sizes and their chances of passing a good product/process. The decision of which confidence statement to use should be based on risk and must be justified. The choice of which sampling plan to use for a given confidence statement is a business decision.

- The AQLs in the above table are nonconformance levels that have a 95% chance of passing the sampling plan. They are useful in deciding which sampling plan to use. Historical data can be used to estimate the nonconformance rate and then matched to the AQL. If historical data is not available, data from similar products or processes can be used. If there is no good estimate of the nonconformance rate, stay away from the top of the table. These are the hardest plans for a good product/process to pass.

- Confusing to many is that there are multiple ways of writing the acceptance criterion. STAT-12 gives the acceptance criterion for a lower specification limit as:

![]()

This can be rewritten as

![]()

This form corresponds to that of a lower normal tolerance interval and a k-form variables sampling plan in ANSI/ASQ Z1.9. Normal tolerance intervals, k-form variables sampling plans and Ppk-form variables sampling plans are equivalent procedures for the one-sided specification case.
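For readers who cannot see the equation images, the two forms described above are presumably the following. This is a reconstruction from the relationship k = 3 Ppk quoted in the discussion further down, not a copy of the STAT-12 text. The symbols are assumptions: x̄ is the sample average, s is the sample standard deviation and c is the tabled Ppk acceptance value.

```latex
\hat{P}_{pk} = \frac{\bar{x} - LSL}{3s} \ge c
\quad\Longleftrightarrow\quad
\bar{x} - k\,s \ge LSL, \qquad k = 3c
```

For an upper specification limit the same reasoning would give (USL - x̄)/(3s) ≥ c, or equivalently x̄ + k s ≤ USL.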

- Suppose n=30 and one wants to construct a 95%/99% 1-sided lower tolerance interval. From a table of k-factors, k=3.064. Dividing by 3 gives 1.02133333. Rounding up gives Ppk = 1.03 per the above table.
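The k-factor in the example above can also be computed rather than looked up. The sketch below is one way to do it, assuming normal data: it evaluates the probability that the average minus k standard deviations stays above the lower spec limit, and searches for the k that passes only 5% of the time at 1% nonconforming. The function names, the integration range and the number of steps are choices made for this illustration and are not from STAT-12.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()

def chi2_pdf(v, df):
    """Density of the chi-square distribution with df degrees of freedom."""
    if v <= 0.0:
        return 0.0
    log_pdf = ((df / 2 - 1) * math.log(v) - v / 2
               - (df / 2) * math.log(2) - math.lgamma(df / 2))
    return math.exp(log_pdf)

def prob_accept(n, k, p, steps=2000):
    """P(xbar - k*s >= LSL) for normal data with true fraction p below LSL.

    Integrates the normal probability for the sample average over the
    chi-square distribution of the sample variance (Simpson's rule).
    """
    df = n - 1
    z = -STD_NORMAL.inv_cdf(p)            # (mu - LSL) / sigma
    upper = df + 12 * math.sqrt(2 * df)   # far into the chi-square tail
    h = upper / steps
    total = 0.0
    for i in range(steps + 1):
        v = i * h
        weight = 1 if i in (0, steps) else (4 if i % 2 else 2)
        pass_given_s = STD_NORMAL.cdf(math.sqrt(n) * (z - k * math.sqrt(v / df)))
        total += weight * pass_given_s * chi2_pdf(v, df)
    return total * h / 3

def one_sided_k(n, confidence, reliability):
    """k such that a process at exactly the stated reliability passes with
    probability 1 - confidence (bisection; P(accept) falls as k rises)."""
    lo, hi = 0.0, 10.0
    for _ in range(50):
        mid = (lo + hi) / 2
        if prob_accept(n, mid, 1 - reliability) > 1 - confidence:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

k = one_sided_k(30, 0.95, 0.99)
ppk = math.ceil(k / 3 * 100) / 100   # round up to two decimals
print(f"k = {k:.3f}, Ppk = {ppk:.2f}")
```

Rounding up rather than to nearest keeps the Ppk criterion at least as strict as the k-factor, so the confidence statement is preserved.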

Variables Sampling Plans – Two-Sided Specification

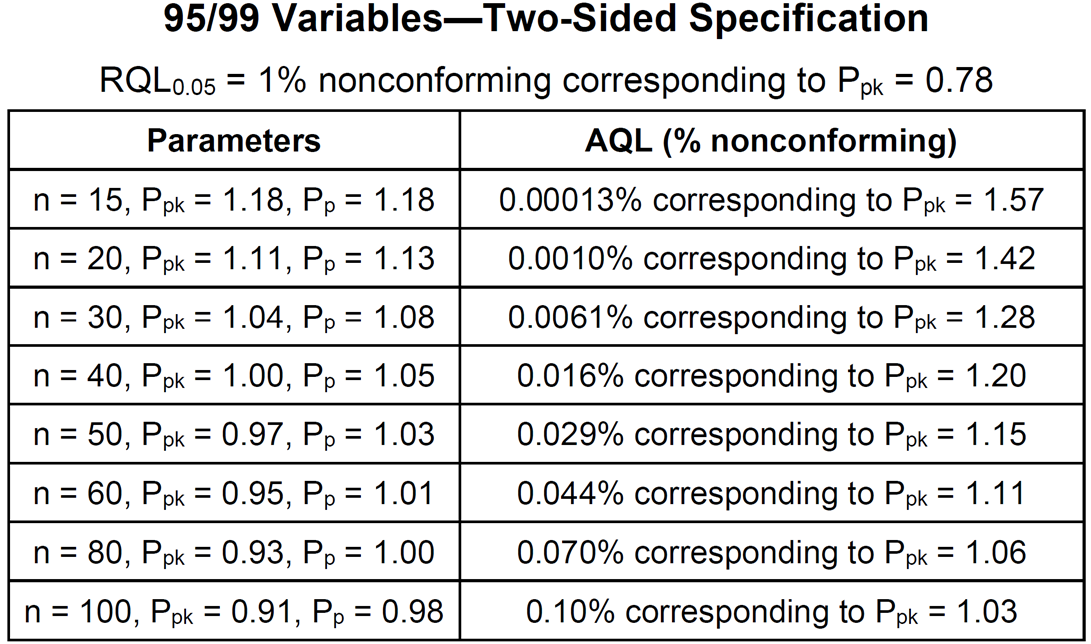

- The two-sided specification table is used when upper and lower specification limits both exist. Appendix F of STAT-12 contains tables like the one shown below for 95%/99% – 95% confidence of more than 99% reliable or conforming.

- All the above sampling plans, if they pass, allow the same confidence statement to be made. They offer the same protection against a bad product/process passing. They are all equivalent from the customer/regulatory point of view. They differ with respect to their sample sizes and their chances of passing a good product/process. The decision of which confidence statement to use should be based on risk and must be justified. The choice of which sampling plan to use for a given confidence statement is a business decision.

- The AQLs in the above table are nonconformance levels that have a 95% chance of passing the sampling plan. They are useful in deciding which sampling plan to use. Historical data can be used to estimate the nonconformance rate and then matched to the AQL. If historical data is not available, data from similar products or processes can be used. If there is no good estimate of the nonconformance rate, stay away from the top of the table. These are the hardest plans for a good product/process to pass.

- Confusing to many is that there are both multiple ways of writing the acceptance criteria and different acceptance criteria used. STAT-12 gives the acceptance criteria as

This can be rewritten as

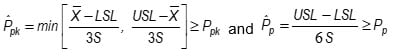

![]()

This form corresponds to the k,MSD-form for 2-sided variables sampling plan in ANSI/ASQ Z1.9. The k,MSD-form and Ppk,Pp-form variables sampling plans are equivalent procedures for the two-sided specification case.

- Suppose n=30 and one wants to construct a 95%/99% 2-sided normal tolerance interval. From a table of k-factors k=3.355. Dividing by 3 gives 1.118333. Rounding up gives Ppk = 1.12. This could be used as the acceptance criterion for Ppk by itself. The Ppk acceptance criterion can be relaxed to 1.04 by adding a Pp acceptance criterion of 1.08 per the above table.

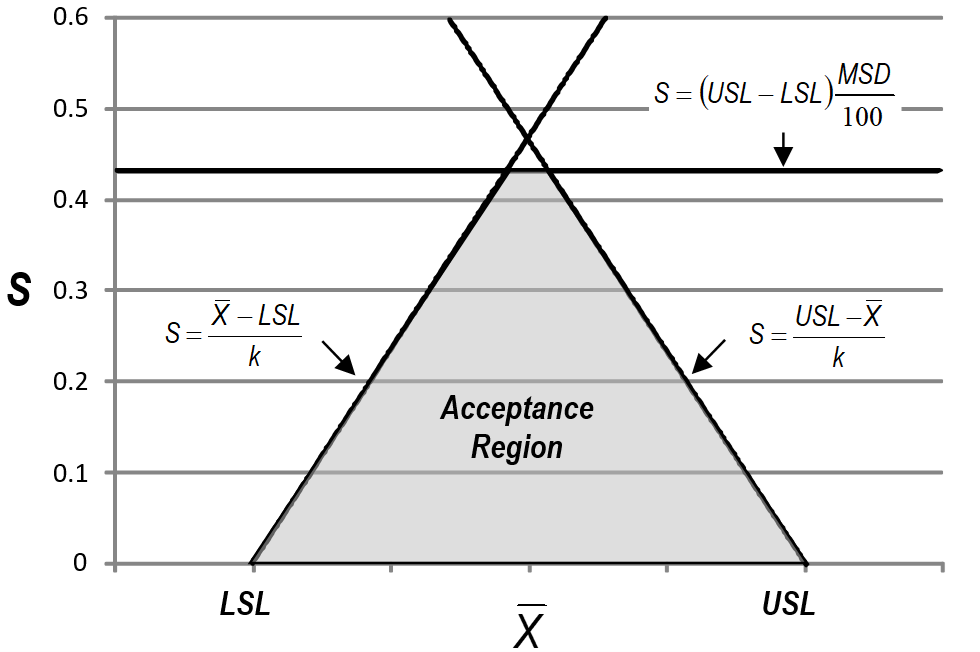

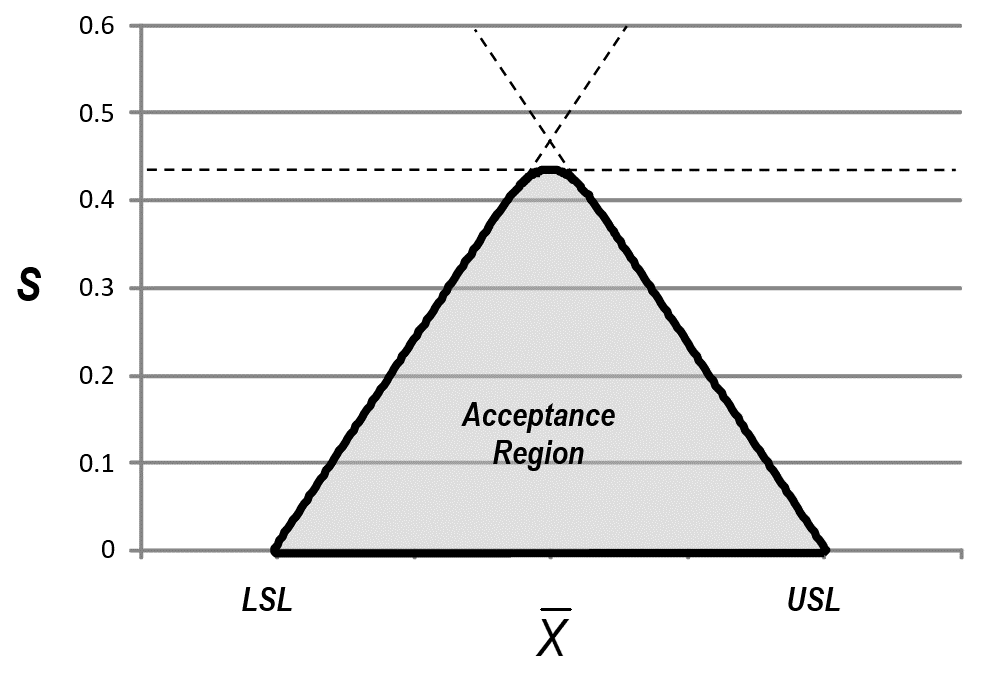

- The difference between a variables sampling plan and a normal tolerance interval can be seen in the plot below. The acceptance criteria for a variables sampling plan is the shaded region below. The acceptance criteria for a 2-sided normal tolerance interval is the triangular region.

- There is also an M-form for 2-sided variables sampling plans, with an acceptance criterion based on the estimated percent nonconforming.

This form is considered the optimal procedure. The acceptance region is shown below. It is more similar to the Ppk,Pp-form acceptance region than the 2-sided normal tolerance interval.

Licenses to the Tables

These tables and all the procedures can be licensed individually or as a group by a company so that they can use them for or as part of their company procedures. This requires paying a 1-time license fee as described at Company Licenses. If your company is using these tables, please make sure they have been properly licensed. Below is a previous version of one of the tables replaced by those in the book of procedures.

Dear,

Can I ask what the relationship is between the RQL and Ppk? I find that an RQL of 0.1% corresponds to a Ppk of 1.03 in another document. How do I convert between them?

The Ppk values associated with the RQLs in STAT-12 are based on the one-sided case. 3*Ppk corresponds to the number of standard deviations to the nearest spec. Assuming normality, the percent nonconforming is calculated based on the normal distribution in Excel as: =100*NORM.DIST(-3*Ppk,0,1,TRUE)

Hello Taylor,

Your explanation gave me the idea and I have got it. Z bench = (USL-μ)/σ = 3Ppk. Then I use the Z bench to calculate the defect rate. Thank you.
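For anyone working outside Excel, the same conversion can be sketched in Python using only the standard library. The function name is illustrative, and the calculation carries the same assumptions as the reply above: normal data and a one-sided specification.

```python
from statistics import NormalDist

def percent_nonconforming(ppk):
    """One-sided percent nonconforming for a normal process with the given Ppk.

    Mirrors the Excel formula =100*NORM.DIST(-3*Ppk,0,1,TRUE).
    """
    return 100 * NormalDist().cdf(-3 * ppk)

print(f"Ppk = 1.03 -> {percent_nonconforming(1.03):.3f}% nonconforming")
```

A Ppk of 1.03 comes out at roughly 0.1% nonconforming, which is the pairing asked about in the question.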

It simplifies things if α is fixed at 0.05 and β is fixed at either 0.05 (95% confidence for validation) or 0.1 (90% confidence for validation and for manufacturing). Then you just adjust the AQL and RQL to adjust the protection.

Whenever one selects sampling plans, both the AQL and RQL are important and should be considered. When validating a process, it is important to demonstrate the process meets the AQL used in manufacturing. One would not want to have an AQL of 1% in manufacturing and pass a process running 3% nonconforming in manufacturing. It is common for validation to use an RQL set equal to the AQL in manufacturing. This gives 95% confidence the manufacturing AQL is met. The validation sampling plan offers far greater protection. This is the difference.

Hello Taylor,

The following steps are my understanding if I want to choose a validation plan after reading your instructions. If I am wrong, please point out. Thanks. I have this question for very long time and hope to solve it under your help.

Step 1: Use historical data, a similar process, or an AQL agreed with the customer to define the nonconforming rate, for example about 1% nonconformance. Then 1% will be used as the RQL when defining the validation sampling plan. Meanwhile, define β, e.g. 5%.

Step 2: Based on the nonconformance rate in step 1, define the AQL used in the validation sampling plan. Any AQL that is no more than the nonconformance rate in step 1 can be used, e.g. 0.13% AQL or any rate between 0 and 1%. Meanwhile, define the α risk.

After these two steps, we can have the validation sampling plan either from your provided table or use the software to calculate.

It is a two-step process as you describe. To clarify your descriptions:

Step 1: Select Validation RQL (β=5% corresponds to 95% confidence). Select RQL to match the manufacturing AQL, which in turn is based on risk/severity. Passing the plan then demonstrates the manufacturing AQL is met and most lots should pass.

Step 2: Select Validation AQL. Match it to an estimate of expected performance based on historical data, when possible.

Then go to my tables.

Hello

Frankly speaking, sampling is an interesting topic and also a very difficult one. Maybe I cannot understand every aspect. But what you do give me much help. Thanks.

For a sample size, if we have multiple detection methods for a single failure, can we divide the sample size by detection method? For example, for 95%/95% and a sample size of 60, there are 60 data points required (not sample size). If we have 2 detection methods, say observing damage and discoloration, can we use 30 samples?

When you have multiple defect types of the same severity, the expectation is that the sampling plan be applied to the group. The 95%/95% plan would be to take n=60 samples and accept if there are zero nonconforming units. A nonconforming unit is a unit with one or more nonconformities.

Hi Wayne,

From what I understand, sampling plan using AQL/RQL is ok to be used for “process validation”. But, I am not so sure if it can be used for “design verification/validation”?

There are several sources that advise against the use of AQL/RQL sampling plan for “design verification/validation”, unfortunately I couldn’t grasp the rationale. May I know your thoughts?

Thanks.

During design verification, you are trying to demonstrate the entire design (range for the design outputs) works. The best way to demonstrate this is to test the limits of the design space. This can be done by worst-case testing. It can also be done by analysis by performing a worst-case tolerance analysis. The issue with a sampling plan is the samples may not push the limits of the design outputs.

For design verification studies, as I understand it, this methodology supports the sample size justification, but how does it affect the acceptance of the study itself? Meaning, how does this compare to statistical tolerance limits analysis or binomial probability? Or would Ppk/Pp replace the need?

The table includes the acceptance criteria along with the sample size. Only sampling plans for attribute data are covered here based on the binomial. The book also contains tables for variables data based on Ppk/Pp, which are equivalent to normal tolerance intervals through the relationship k = 3 Ppk.

Question regarding Table F3 of STAT-12:

for a single 90/70 attribute plan with RQL0.10 = 30% non conforming. What would a and AQL % be for a sample size of 50?

Thanks,

JB

You can use Sampling Plan Analyzer to answer this question. Enter a single sampling plan with n=50 and increase the accept number until RQL0.10 is just below 30%. The answer is a=10 and AQL = 12.86%.
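The same trial-and-error search can be sketched with a short binomial calculation. This is an illustration of the search, not the algorithm used by Sampling Plan Analyzer, and the function names are made up for the example.

```python
from math import comb

def prob_accept(n, a, p):
    """Probability plan (n, a) passes at fraction nonconforming p (binomial)."""
    return sum(comb(n, x) * p**x * (1 - p)**(n - x) for x in range(a + 1))

def fraction_passing_with(n, a, pa):
    """Fraction nonconforming at which plan (n, a) passes with probability pa."""
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2
        if prob_accept(n, a, mid) > pa:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

n, target_rql = 50, 0.30
a = 0
# Raise the accept number while the next plan's RQL0.10 still stays below 30%.
while fraction_passing_with(n, a + 1, 0.10) < target_rql:
    a += 1

rql = fraction_passing_with(n, a, 0.10)   # 10% chance of passing
aql = fraction_passing_with(n, a, 0.95)   # 95% chance of passing
print(f"n={n}, a={a}: RQL0.10={100 * rql:.2f}%, AQL={100 * aql:.2f}%")
```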

Hello Dr. Taylor,

I am trying to validate an attribute test method. Using your tables for a secondary method, single sampling plan, at 90% confidence and 95% reliability it directs me to n=45, a=0, AQL = 0.11% and LTPD0.1 = 5%. Firstly, it suggests separate sampling plans for alpha and beta errors. Alpha must be no less than 40% of samples. Does that mean I must run two validations with 45 samples each or two validations with 18 for alpha and 27 for beta sampling plans? Secondly, a=0 means no defects allowed, but how do I interpret the AQL 0.11% and LTPD 5%? Thanks for your help

There are two errors that can be made: (1) falsely accepting a nonconforming unit and (2) falsely rejecting a conforming unit. The 90%/95% plan n=45, a=0 means to inspect n=45 nonconforming units and accept if there are a=0 false acceptances. My book provides alternative 90%/95% plans including double sampling plans that decrease the chance of a false failure of the TMV.

My book recommends not having an acceptance criterion for false rejections. Otherwise, a separate sampling plan is required for false rejections.

The 5% in LTPD0.1 = 5% is a 5% false acceptance rate meaning 95% reliability. The 0.1 is a 10% chance of an incorrect decision meaning 90% confidence. LTPD0.1 = 5% is the same as 90%/95%. This is an unacceptable false acceptance rate the sampling plan is designed to reject. The AQL is a false acceptance rate the sampling plan is designed to accept. 0.11% means 99.89% of nonconforming units are rejected. This is how good the inspection must be to be assured of passing the TMV.
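The AQL and LTPD quoted for the n=45, a=0 plan can be checked by hand, because a zero-acceptance plan passes only when every one of the n inspections is correct. The sketch below assumes the binomial model; the function name is illustrative.

```python
def zero_accept_point(n, prob_pass):
    """False acceptance rate at which a zero-acceptance plan of size n
    passes with probability prob_pass, from (1 - p)**n = prob_pass."""
    return 1 - prob_pass ** (1 / n)

n = 45
aql = zero_accept_point(n, 0.95)    # passes 95% of the time
ltpd = zero_accept_point(n, 0.10)   # passes only 10% of the time
print(f"n={n}, a=0: AQL={100 * aql:.2f}%, LTPD0.1={100 * ltpd:.2f}%")
```

The LTPD0.1 comes out just under 5%, which is why n=45 supports the 90%/95% statement. With n=44 it would be slightly above 5%.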

Hi! The company that I work for acquired your book and I was looking into one of the acceptance criteria. I just wanted to understand the relationship between the number of samples and the required Ppk for each confidence/reliability. For example, how is it that we can say it is statistically valid that for a sample size of 15, with 90% reliability and 95% confidence, we require a Ppk of 0.69?

n=15, Ppk=0.69 is a variables acceptance sampling plan. The more common form for a variables sampling plan is n=15 and k=2.07=3 Ppk. ANSI Z1.9 contains such plans and information can be found on calculating the OC curve. This is also the same as a normal tolerance interval with parameters n and k with an acceptance criterion of passing if the tolerance interval is inside the spec limits. They are all identical procedures.

The article at variation.com/confidence-statements-associated-with-sampling-plans/ shows how the OC curve relates to the confidence statement. Your plan has RQL0.05 = 10%.

Hello Dr. Taylor,

For design verification, when establishing a variables single sampling plans for proportions, n and Ppk are two parameters considered for a one-sided specification. In Appendix B of STAT-12 there is pass criteria given for a one-sided-only lower specification limit (LSL) involving Ppk. How does this correlate to the tables in Appendix F? For example, for “95/97 Variables – One-Sided Specification” table multiple sample sizes and Ppk values are given. How do I select the appropriate parameters to compare to the pass criteria given in Appendix B? Do I start with the smallest sample size (n=15) shown?

The 95/97 table in Appendix F of STAT-12 has two columns. The first column is the Parameters column. It provides the sample size (n) and acceptance criteria (Ppk) of eight different sampling plans that allow the 95/97 statement to be made if they pass. The second column is the AQL column. This column is used to decide on which plan to use. The AQL column tells you how high the actual Ppk must be to be assured of passing the sampling plan. As an example, suppose previous data estimates the Ppk is 0.98. Matching this to the AQL column results in the sampling plan n=40 and Ppk=0.81.

Hello Taylor,

I tried using Minitab’s Variable acceptance sampling plan feature to generate a sampling plan for a double-sided specification and a single-sided specification. But the sampling plan, i.e. the number of units to be tested and the k-factor, is the same for both the single-sided and double-sided specification. The only difference is that I have an additional requirement of passing the MSD. Is it OK to use?

The n and k given by Minitab for variables sampling plans are correct for 1-sided sampling plans. They should not be used for 2-sided sampling plans. The additional MSD requirement is an assumption for the true standard deviation and is not part of the acceptance criteria for individual lots. My book Statistical Procedures for the Medical Device Industry gives 2-sided variables plans with acceptance criteria for MSD using the estimated lot standard deviation. This results in different n and k values. The other option is to use the n and k values for a 2-sided normal tolerance interval with no criteria on MSD.

Hi taylor,

Could you show how the calculation is done to relax the ppk from 1.12 to 1.04 with the pp included?

In the graphic below, MSD corresponds to Pp and k corresponds to Ppk. The shaded region is the acceptance region for a 2-sided Variables Sampling Plan.

If MSD is increased high enough the acceptance region becomes a triangle and the Pp acceptance criterion is dropped. The k-value in this case is the k-value for a 2-sided normal tolerance interval. As MSD is decreased the top end of the triangle is cut off creating a Pp acceptance criterion. This allows the k value (Ppk) to be decreased to widen the interval for the average.

The probability of acceptance associated with the gray region is calculated by integrating over the sample standard deviation based on the Chi-square distribution and the sample average based on the normal distribution.

Thanks! btw how do you determine the Pp value for the selected sample size at X confidence and X reliability?

The short answer is that Pp is selected to make the acceptance region in Figure 5.1 as close as possible to the acceptance region for the M form in Figure 5.2. The M region in Figure 5.2 is all combinations of the average and standard deviation resulting in an estimated percent nonconforming of 1% or less.

Pp is calculated based on n and Ppk. Start with Ppk. Determine k = 3 Ppk. Determine the value of M in Figure 5.2 that has the same sides as the k-value in Figure 5.1. Determine the Maximum Standard Deviation (MSD) from the M acceptance region in Figure 5.2. Use this to determine the corresponding value of Pp in Figure 5.1.

Thanks for your help so far, but how do you get the worst-case percentage nonconforming in M based on confidence and reliability for a 2-sided spec? Could you show one set of calculations for 95/99 and n=30?

Software is required. Determining M requires numerically solving for M in OC(30,M,1%,f) = 0.05 where f is the fraction in the lower tail. OC(30,M,1%,f) must also be numerically solved as it is a triple integral.

To determine M using Sampling Plan Analyzer, click Enter New Plan and select Variables Sampling Plan. Click OK to display the dialog box below.

Enter 30 for the sample size and use trial and error to determine M. The result is shown below – M = 0.036%.

Dear Dr,

Found this statement here for 95%/99% Sampling Plan – “95% confidence of more than 99% conformance means there is a 95% chance of REJECTING a 99%conforming product/process. 99% conforming is therefore an unacceptable level of quality designed to fail”

Is the term “rejecting” correct in this context? Please have a look.

Having a sampling plan with a 95% confidence level and a 99% conformance rate means that there is a 95% chance of ACCEPTING OR 5% CHANCE OF REJECTING a 99%conforming product/process. 99% conforming is therefore an unacceptable level of quality designed to fail. Could you check on this comprehension as well?

Thanks

The statement in the article is correct: “95% confidence of more than 99% conformance means there is a 95% chance of rejecting a 99% conforming product/process. 99% conforming is therefore an unacceptable level of quality designed to fail.” The logic is: if 99% conforming fails, then passing means the process must be better than 99% conforming.

The OC curve of one of the plans in the table, n=299, a=0, is shown below. There is a 5% chance of passing at 1% nonconforming, corresponding to a 95% chance of failing.

In reading the discussion above I have a question on the following statement:

Suppose n=30 and one wants to construct a 95%/99% 2-sided normal tolerance interval. From a table of k-factors k=3.355. Dividing by 3 gives 1.118333. Rounding up gives Ppk = 1.12. This could be used as the acceptance criterion for Ppk by itself. The Ppk acceptance criterion can be relaxed to 1.04 by adding a Pp acceptance criterion of 1.08 per the above table.

I’ve gone through the k table and found the Ppks by dividing by 3. However, how can you relax the Ppk values? Is there a formula for that?

Regards & thanks

The formulas are complex (triple integral) and must be solved numerically. They have been implemented in Sampling Plan Analyzer.

In relation to my question on relaxing the Ppk, I understand that the theory is above, but would it be possible to show how one of the line items from the 95/99 variables two-sided specification table is calculated? Perhaps the one already mentioned: n=30, Ppk 1.04 and Pp 1.08.

Thank you,

The OC curve for a 2-sided variables sampling plan not only is a function of the fraction nonconforming (p) but also depends on the fraction of nonconforming units in the lower tail (fUSL). For example if p = 0.01 and fUSL=0.5 it means the fraction nonconforming in the lower tail is 0.005 and the fraction nonconforming in the upper tail is also 0.005. There are a series of OC curves for different fUSL as shown below.

The formula for the OC curve based on p and fUSL is:

This allows Sampling Plan Analyzer to evaluate the OC curve. To generate the table, the program searches for different Ppk and Pp values to obtain the desired OC curve using the worst case of fUSL.
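To see what a given p and tail split mean for the true process, the sketch below converts them into the true Pp and Ppk of a normal process. It is not the OC curve formula itself, which requires the numerical integration described above. The function and argument names are illustrative, and the lower-tail fraction follows the convention of the example above.

```python
from statistics import NormalDist

def true_pp_ppk(p, f_lower):
    """True Pp and Ppk of a normal process with total fraction nonconforming p,
    of which the fraction f_lower (strictly between 0 and 1) is below the LSL."""
    nd = NormalDist()
    z_lower = -nd.inv_cdf(p * f_lower)         # (mu - LSL) / sigma
    z_upper = -nd.inv_cdf(p * (1 - f_lower))   # (USL - mu) / sigma
    pp = (z_lower + z_upper) / 6               # (USL - LSL) / (6 sigma)
    ppk = min(z_lower, z_upper) / 3            # distance to nearest spec / (3 sigma)
    return pp, ppk

for f in (0.5, 0.9, 0.99):
    pp, ppk = true_pp_ppk(0.01, f)
    print(f"p=1%, lower-tail fraction={f}: Pp={pp:.3f}, Ppk={ppk:.3f}")
```

With an even split the process is centered and Pp equals Ppk. As the split becomes lopsided, Ppk falls while Pp rises, which is why the OC curve depends on the split as well as on p.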

Hi Taylor,

Going through the tables and comments for the variables 1-sided and 2-sided plans, I understand the parameters column and the relationship between n, Ppk and Pp, as you explained the relaxation of Ppk by adding the Pp.

However, when it goes to the AQL and LTPD, I can’t understand where those Ppk came from and the relation with the “parameters” column. Could you explain it, please?

The Ppk values are calculated from the AQL and LTPD rather than the parameters. For the AQL as a percent, Ppk = -NORM.INV(AQL/100,0,1)/3. For the Ppk associated with the LTPD, change AQL to LTPD in the formula.
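The Excel formula above translates directly to Python's standard library. The function name is illustrative, and the conversion assumes normal data and the one-sided case, as in the earlier reply on RQL and Ppk.

```python
from statistics import NormalDist

def ppk_from_percent(percent_nonconforming):
    """Ppk equivalent to a one-sided percent nonconforming.

    Mirrors the Excel formula =-NORM.INV(AQL/100,0,1)/3.
    """
    return -NormalDist().inv_cdf(percent_nonconforming / 100) / 3

for pct in (1.0, 0.1):
    print(f"{pct}% nonconforming -> Ppk = {ppk_from_percent(pct):.3f}")
```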

Hi Wayne,

If you have multiple lots with potentially some defects per lot and you want to gain confidence (with attribute data only) in the overall lot population defect rate what is the best method? How can I calculate my confidence for the population of lots for example?

Can you explain please? Thanks

You can estimate the process performance based on the total defects found and total number of samples tested. Appendix D of Stat-12 of my book of procedures describes how to calculate a confidence statement by a variety of methods including using the spreadsheet that accompanies the book.
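One of the methods that could be used for such a pooled statement is the exact binomial upper confidence limit, sketched below. It is offered as an assumption about what "a variety of methods" might include, not as the specific procedure in Appendix D. The lot results are made up for illustration. Pooling in this way treats all lots as coming from one stable process.

```python
from math import comb

def prob_at_most(n, x, p):
    """P(X <= x) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(x + 1))

def upper_limit_nonconforming(n, x, confidence=0.95):
    """Exact one-sided upper confidence limit on the fraction nonconforming,
    found by bisection on P(X <= x | n, p) = 1 - confidence."""
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = (lo + hi) / 2
        if prob_at_most(n, x, mid) > 1 - confidence:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

# Hypothetical results, made up for illustration: (units tested, nonconforming units)
lots = [(100, 0), (100, 1), (100, 0)]
total_n = sum(n for n, _ in lots)
total_x = sum(x for _, x in lots)
upper = upper_limit_nonconforming(total_n, total_x)
print(f"{total_x} nonconforming in {total_n}: 95% confidence of more than "
      f"{100 * (1 - upper):.2f}% conforming")
```

As a cross-check against the attribute tables, zero nonconforming units in 299 gives an upper limit just under 1%, matching the 95%/99% plan n=299, a=0.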

I’m confused about how the null and alternative hypotheses are stated when acceptance sampling by variables is used for product design verification, along with the classical descriptions for AQL/alpha risk and RQL/beta risk. For example, the null is supposed to represent the status quo (no evidence of, no effect, etc.) while the alternative is supposed to represent the claim you are seeking to make. So, given the purpose and definition of design verification, and generically speaking, the null would be “there is no objective evidence that the design output meets the design input requirement”, and the alternative would be “there is objective evidence that the design output meets the design input requirement”. But then classical alpha and beta risk seem to have their meanings swapped. For example, given the expressions of the null and alternative I’ve just provided, if I were to commit a type 1 error with a design verification test, I would be rejecting the null when it is actually true or concluding there is objective evidence that the design output meets the design input requirement when there really isn’t. In effect, my test passes a bad design, but this would be how type 2 error is classically defined. Where am I going wrong?

Take a t-test to see if two means are equal. The Null Hypothesis is they are equal and the Alternative Hypothesis is they are different. If the p-value is 0.05 or less, you can state with 95% confidence the means are different. Otherwise, all you can state is that no significant difference was found. This is appropriate if you are trying to prove there is a significant effect.

But what if you are trying to prove they are equivalent? Then, the two hypotheses should be reversed. For a sampling plan, one can think of the Null Hypothesis as the percent nonconforming exceeds the RQL and the Alternative is the percent nonconforming is less than the RQL. Alpha, associated with the Null Hypothesis, then should apply to the RQL.

In my book I take a much simpler approach. I define the probability of acceptance at the AQL as 0.95 and the probability of acceptance at the RQL as 0.05 or 0.1. I avoid the alpha and beta altogether to prevent this type of confusion. The only time you will find alpha mentioned is (1) as a parameter of the Beta and Gamma distributions and (2) when performing equivalency testing using Minitab when it asks for alpha.

I believe this same logic applies to attribute sampling plans. In that context, the appropriate hypothesis setup would be: H₀: Our process is not capable; H₁: Our process is capable. Under this framework, it appears that the traditional interpretation of alpha and beta risks is reversed. However, this inversion isn’t incorrect, it simply reflects the validation mindset, where the burden of proof lies in demonstrating capability, correct?

For validation you are proving the process is capable (H₁, below RQL). This is akin to an equivalency test rather than the classical hypothesis test in that it reverses the two hypotheses.

Hello Dr. Taylor,

Can you please explain the Confidence and Reliability levels for a Ppk of 1.33 and 1.67, respectively? My understanding of the AIAG was that a Ppk of 1.33 reflected a 95% confidence of 99% reliability, and a Ppk of 1.67 was 99/99 . Based on the tables in STAT-12, my understanding was incorrect (the 95/95 two-sided Ppk is 0.76).

The protection also depends on the sample size.

n=30, Ppk=1.33 is 95/99.9 with an AQL of 0.0000524% nonconforming or 0.5 defects per million

n=100, Ppk=1.33 is 95/99.98 with an AQL of 0.0003749% nonconforming or 3.7 defects per million

n=30, Ppk=1.67 is 95/99.995 with an AQL of 0.000000050% nonconforming or 0.5 defects per billion

n=100, Ppk=1.67 is 95/99.9995 with an AQL of 0.0000010% nonconforming or 10 defects per billion

Dear Dr. Taylor,

The table LTPD-based Variable Sampling Plan is limited to n=100. My data is more than that, let’s say n=200. Can I use the MSD/k/Ppk/Pp values for n=100 (with the same confidence level and reliability)? Or is there any formula to calculate the MSD/k/Ppk/Pp corresponding to n=200? Please advise.

Thanks

You can use the acceptance values for a smaller sample size and the protection will be at least as good as the specified Confidence/Reliability statement. However, there is a risk of false rejection.

Acceptance criteria for the larger sample size can be determined using the software package Sampling Plan Analyzer. It is a trial and error process where you enter the variables sampling plan’s sample size and an estimated k-value. Then play with the k-value until you get the desired LTPD. You then have to convert k and MSD to Ppk and Pp as described at STAT-12: Verification/Validation Sampling Plans for Proportion Nonconforming.

Dear Dr. Taylor,

I would better understand two aspects:

1) 95/99 ATTRIBUTE Table – Single sampling plan -> numbers that I see (299,0 ; 473, 1 ; 628,2…) are related to batch size?

2) where I can find tables with other Conforming percentage? (i.e. 95/80 or 95/90)

Thank you,

Andrea

The sample sizes and accept numbers are independent of the batch or populations size. See The Effect of Lot Size.

Tables for different confidence statements can be found in STAT-12: Verification/Validation Sampling Plans for Proportion Nonconforming of the book Statistical Procedures for the Medical Device Industry. It contains Table F1: Sampling Plans for 50%, 60%, 70%, 75%, 80%, 85%, 90%, 93.5%, 95%, 96%, 97%, 97.5%, 98.5%, 99%, 99.35%, 99.5%, 99.6%, 99.7%, 99.75%, 99.85%, 99.9%, 99.935%, 99.95%, 99.96%, 99.97%, 99.975%, 99.985%, 99.99% conforming.

Dear Dr. Taylor,

My colleague referred me to ISO 16269-6. I found the similarities with your STAT-12, Table Fs.

ISO 16269-6 explains that it is possible to determine the sample size necessary to achieve at least 100(1 −α) % confidence that at least 100p % of the population are lying between the vth smallest observation (i.e., order statistic x(v)) and the wth largest observation (i.e., order statistic x(n–w+1)).

Appreciate any thoughts you may provide.

The nonparametric tolerance intervals in ISO 16269-6 and attribute sampling plans in STAT-12 are both nonparametric procedures and are related but not identical. First, attribute sampling plans don’t require there to be an underlying measurable characteristic that can be used to construct a nonparametric tolerance interval.

When there is an underlying measurement with specification limits, the nonparametric tolerance intervals can be converted into sampling plans by adding an acceptance criterion that the tolerance interval be within the specification limits. This allows the same confidence/reliability statement to be made relative to the spec limits. There is a difference in terms of what will pass. A nonparametric tolerance interval using x(2) and x(n-1) passes if no more than one unit is below the lower spec limit and no more than one unit is above the upper spec limit. The attribute plan n, a=2 passes if no more than two units are outside the spec limits, but there could be two units below the lower spec, two above the upper spec or one outside of each spec. Attribute sampling plans are slightly more efficient when making confidence/reliability statements relative to spec limits.

When you are unable to verify the assumption of small between-lot variation in your PQ using only 3 lots, do you have a recommendation on how to determine the number of additional lots that should be tested? Does the number of additional lots depend on the magnitude of the between-lot standard deviation compared to the total standard deviation, for example:

Between-Lot Std. Dev. 50%, 7 lots

A common approach is to increase the number of lots to 10.

A more rigorous approach is to use Satterthwaite’s approximation to the effective degrees of freedom/sample size. The effective sample size depends on the number of lots, number of samples per lot and the percent of the variation due to lots. As an example, assume there are 3 lots and 10 samples per lot. If 0% of the variation is between lots the effective sample size is 30 = 3 * 10. If 100% of the variation is between lots the effective sample size is 3. In all other cases it is between 3 and 30. The effective sample size can be used to calculate a normal tolerance interval that accounts for the between-lot variation.

This approach can be used to evaluate different numbers of lots and samples per lot to determine the best combination of sample sizes to use. I would recommend consulting a Statistician.

Dear Taylor:

Please accept my respect.

3+ years ago i knew you in J&J’s validation WI. Recently we talk about you frequently when co-work with BSC. You are popular in China~

My question is:

Which acceptance criterion is easier to achieve: a high Ppk, or a lower Ppk & Pp?

B&R

Variables sampling plans for two-sided specifications have several different forms:

The k/Ppk form, which is based on normal tolerance limits.

The Ppk-Pp form in my book Statistical Procedures for the Medical Device Industry.

The M form in ANSI Z1.9.

All these approaches are valid. See earlier responses explaining differences.

The Ppk-Pp form is slightly more efficient than the k/Ppk form for a given Confidence/Reliability statement. This means it is slightly more likely to pass a good process/design.

Dear Taylor:

Thanks for your professional reply,

Ppk-Pp method is more scientific and k/Ppk method is more strict

Best wishes for you and your family

Jonathan